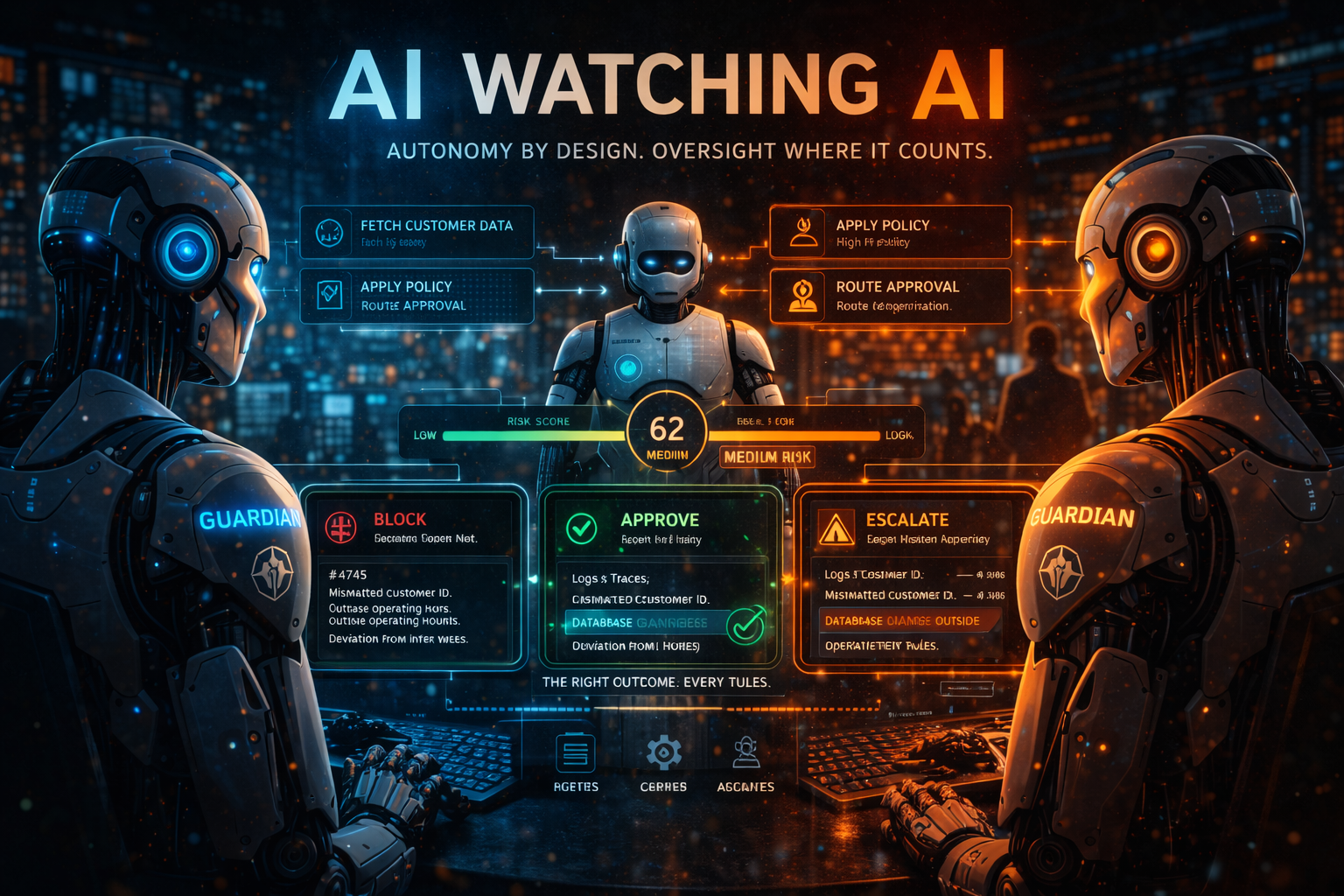

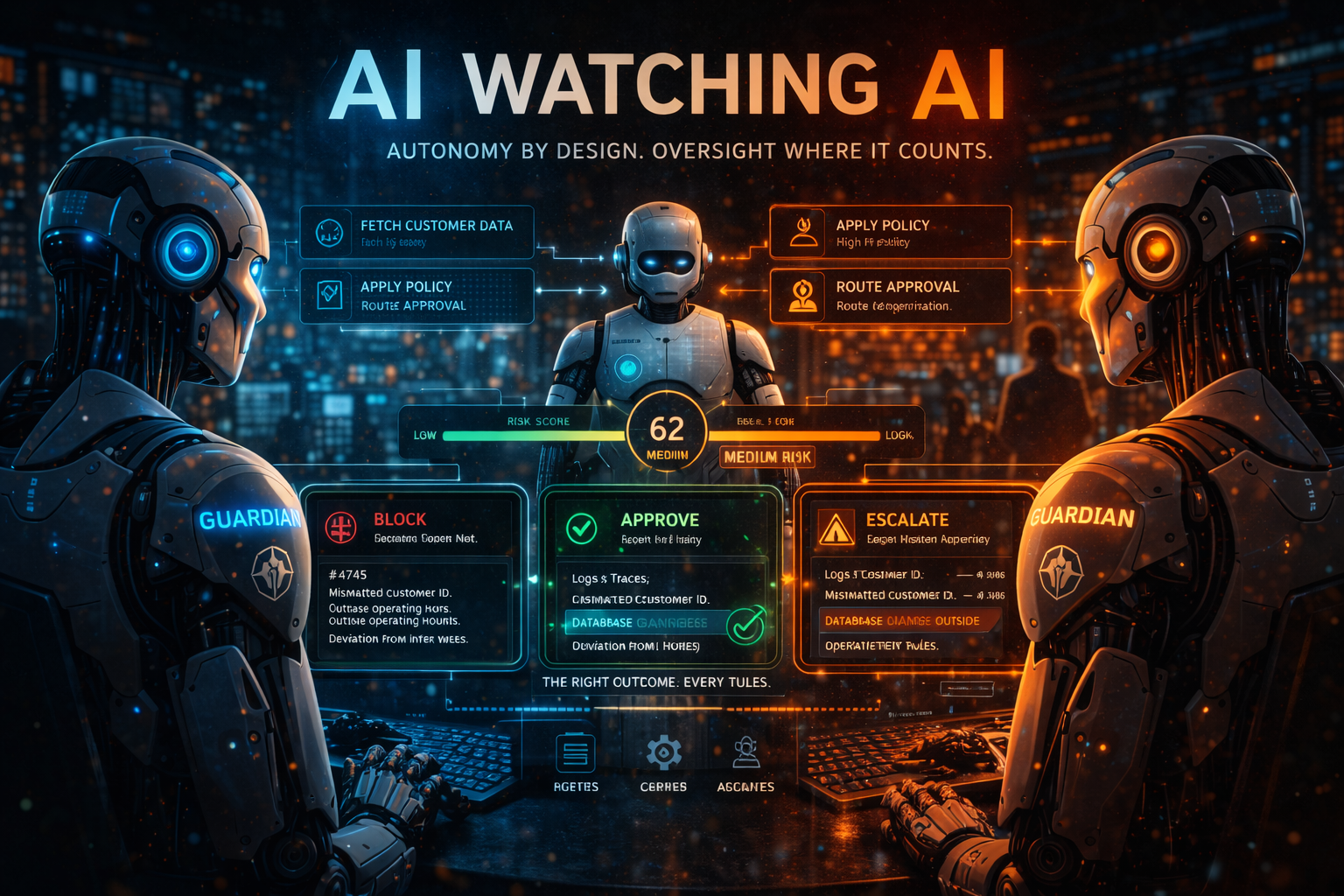

AI Watching AI

At scale, humans can't review every agent interaction. The case for guardian agents — and why AI overseeing AI is uncomfortable but probably inevitable.

8 articles tagged with "AI Governance"

An agent built correctly can still drift into dangerous territory through misconfiguration. Most organizations have no way to detect it until something breaks.

Most AI agents run at whatever autonomy level was easiest to implement, not the one that reflects actual risk. Here's how to tell the difference.

The biggest barrier to real AI automation isn't the model. It's connectivity. And the protocol solving it is creating your next governance problem.

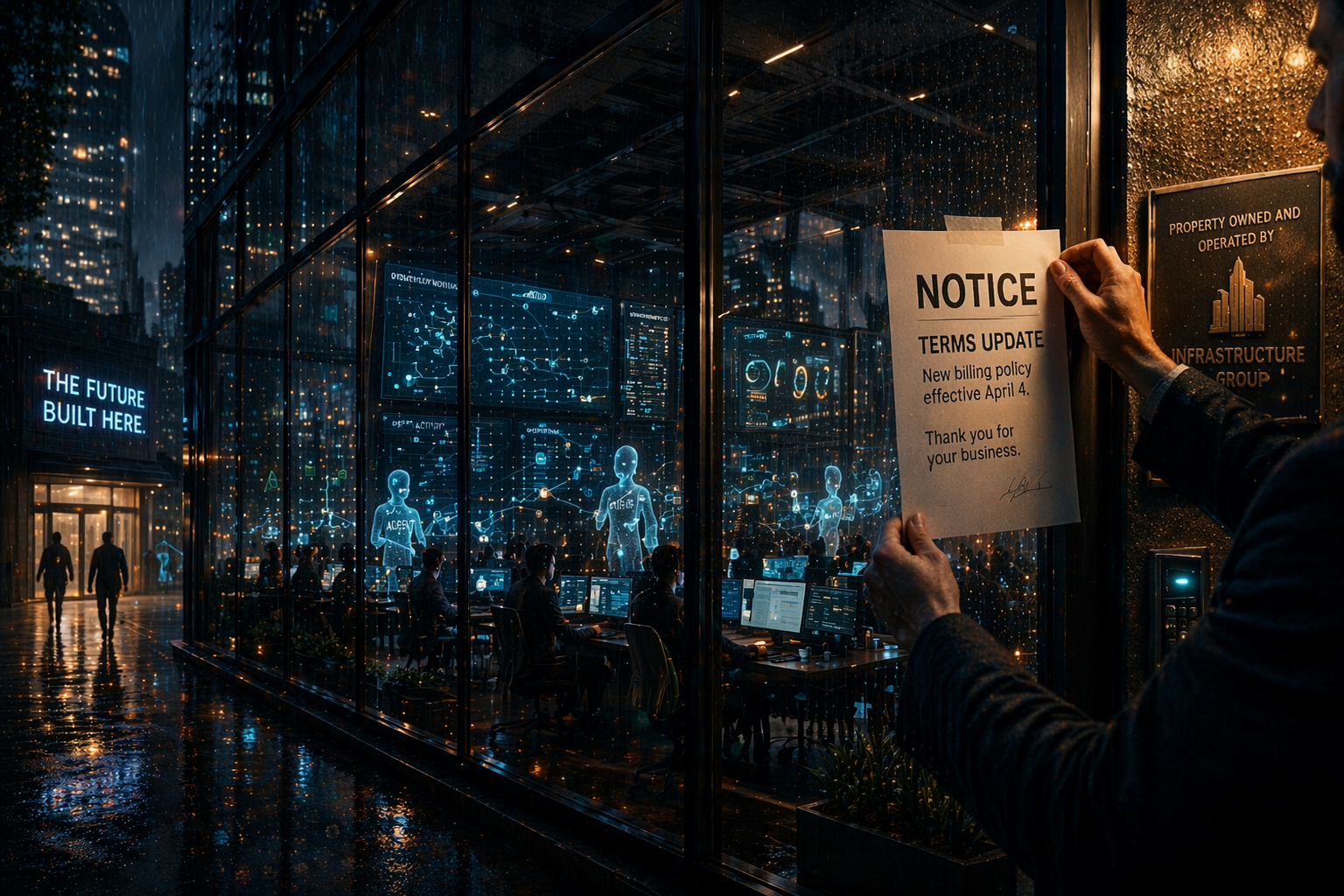

Thousands of AI agent workflows disrupted overnight — not because agents broke, but because a vendor changed its billing. That's a governance failure.

Most organizations badge their contractors, track their access, and revoke it when they leave. They don't do any of it for AI agents. That gap is closing fast.

You can't govern, defend, or prove value from AI systems you can't account for. Why inventory is the first place enterprise AI governance gets real.

Building AI agents for production takes far more than good prompts. Real agent systems need tools, memory, error handling, and organizational trust.